Top 10 Web Crawling Tools to Scrape Websites Quickly

Web crawling is also commonly referred to as web data extraction, web scraping, or screen scraping; all of these terms relate to the same concept and process. Web crawling tools are available for general public use, not just for programmers. Web pages are designed to be read by humans rather than machines, so web scrapers are handy tools that load a page's entire HTML code and pull out the data relevant to your question. For example, suppose you want the detailed product descriptions from your company's product pages: a scraper can collect them for you automatically.
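As a rough illustration of pulling a product description out of a page's HTML, here is a minimal sketch using only Python's standard library. The sample markup, the `product-description` class name, and the helper names are assumptions made up for this example; a real scraper would first download the page and would need to cope with messier HTML.

```python
from html.parser import HTMLParser

# Hypothetical page markup; a real scraper would download this HTML first.
SAMPLE_HTML = """
<html><body>
  <h1>Acme Kettle</h1>
  <div class="product-description">Boils 1.5 litres in <b>two</b> minutes.</div>
  <div class="footer">Contact us</div>
</body></html>
"""


class DescriptionParser(HTMLParser):
    """Collects the text inside the first element that has a given class.

    Limitation of this sketch: unclosed void tags such as <br> inside the
    target element would throw off the depth counter.
    """

    def __init__(self, target_class):
        super().__init__()
        self.target_class = target_class
        self.depth = 0      # greater than 0 while inside the target element
        self.chunks = []    # text fragments found inside the target element
        self.done = False   # set once the target element has been closed

    def handle_starttag(self, tag, attrs):
        if self.done:
            return
        if self.depth:
            self.depth += 1
        elif self.target_class in (dict(attrs).get("class") or "").split():
            self.depth = 1

    def handle_endtag(self, tag):
        if self.depth:
            self.depth -= 1
            if self.depth == 0:
                self.done = True

    def handle_data(self, data):
        if self.depth:
            self.chunks.append(data)


def extract_description(html, target_class="product-description"):
    parser = DescriptionParser(target_class)
    parser.feed(html)
    # Collapse runs of whitespace so the result reads as one clean sentence.
    return " ".join("".join(parser.chunks).split())


print(extract_description(SAMPLE_HTML))
```

The tools listed in this article hide this kind of parsing behind a point-and-click interface, which is why they are useful to non-programmers.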

Non-programmers can also benefit from web crawling, at a small or large scale, and fulfill their data needs. A web scraping tool is an automated crawling technology that bridges the gap between hard-to-reach web data and the public.

The following are the 10 best web crawlers for your reference. You can use these crawling tools to their full potential to gather as much data as you need!

Cyotek WebCopy

WebCopy is as descriptive as its name. This free web crawling tool lets you copy a target website, partially or in full, to your hard disk for offline reference. You can change its settings to tell the bot how you want it to crawl, and you can also configure user agent strings, default documents, and domain aliases. However, WebCopy does not include a virtual DOM or any form of JavaScript parsing. If a website relies heavily on JavaScript to function, WebCopy is unlikely to produce a true copy.

HTTrack

As a website crawler, HTTrack provides functions for downloading an entire website to your PC. Versions are available for Windows, Linux, Sun Solaris, and other Unix systems, which covers most users. HTTrack can mirror one site, or several sites together with shared links. Under "Set options" you can decide how many connections to open concurrently while downloading web pages. You can also get the files, photos, and HTML code from the mirrored websites.

HTTrack also offers proxy support to maximize speed. It works as a command-line program, which is one reason it is used even by professional programmers.
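The idea of capping the number of concurrent connections can be sketched in a few lines of Python. This is not how HTTrack is implemented; `fetch`, `mirror`, and `max_connections` are hypothetical names, and `fetch` is a stand-in that returns fake content so the example runs offline.

```python
from concurrent.futures import ThreadPoolExecutor


def fetch(url):
    # Stand-in for a real download so the sketch runs without a network.
    return f"<html>content of {url}</html>"


def mirror(urls, max_connections=4):
    """Fetch pages with at most `max_connections` downloads running at once."""
    with ThreadPoolExecutor(max_workers=max_connections) as pool:
        # pool.map() yields results in the same order as the input URLs.
        return dict(zip(urls, pool.map(fetch, urls)))


pages = mirror(
    ["https://example.com/", "https://example.com/about"],
    max_connections=2,
)
print(len(pages), "pages fetched")
```

A higher limit finishes sooner but puts more load on the target server, which is why mirroring tools expose it as a setting.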

Getleft

Getleft is a free, easy-to-use website crawler. It lets you download an entire website or a single web page. After launching Getleft, you enter a URL and choose the files you want to download before it starts. As it downloads, it rewrites the links for local browsing. Getleft also offers multilingual support, with about 14 languages available.

On the downside, Getleft offers only limited FTP support: it will download files, but not recursively.
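Changing links for local browsing means turning each same-site link into a path relative to the saved copy of the page. The sketch below shows one way to do that with Python's standard library; it is an illustration under simplifying assumptions (query strings are ignored, directories map to `index.html`), not Getleft's actual algorithm, and the function names are hypothetical.

```python
import posixpath
from urllib.parse import urljoin, urlparse


def local_path(url):
    """Map a URL to a relative file path inside the mirror folder."""
    parts = urlparse(url)
    path = parts.path
    if path == "" or path.endswith("/"):
        path += "index.html"  # directory URLs are saved as index.html
    return posixpath.join(parts.netloc, path.lstrip("/"))


def rewrite_link(page_url, href):
    """Rewrite href so it works when page_url is opened from disk."""
    target = urljoin(page_url, href)
    if urlparse(target).netloc != urlparse(page_url).netloc:
        return href  # external links are left untouched
    page_dir = posixpath.dirname(local_path(page_url))
    return posixpath.relpath(local_path(target), start=page_dir)


print(rewrite_link("https://example.com/docs/intro.html", "/images/logo.png"))
```

With this mapping, a page saved under `example.com/docs/` reaches the site logo through `../images/logo.png` instead of a server-relative path that would not resolve on disk.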

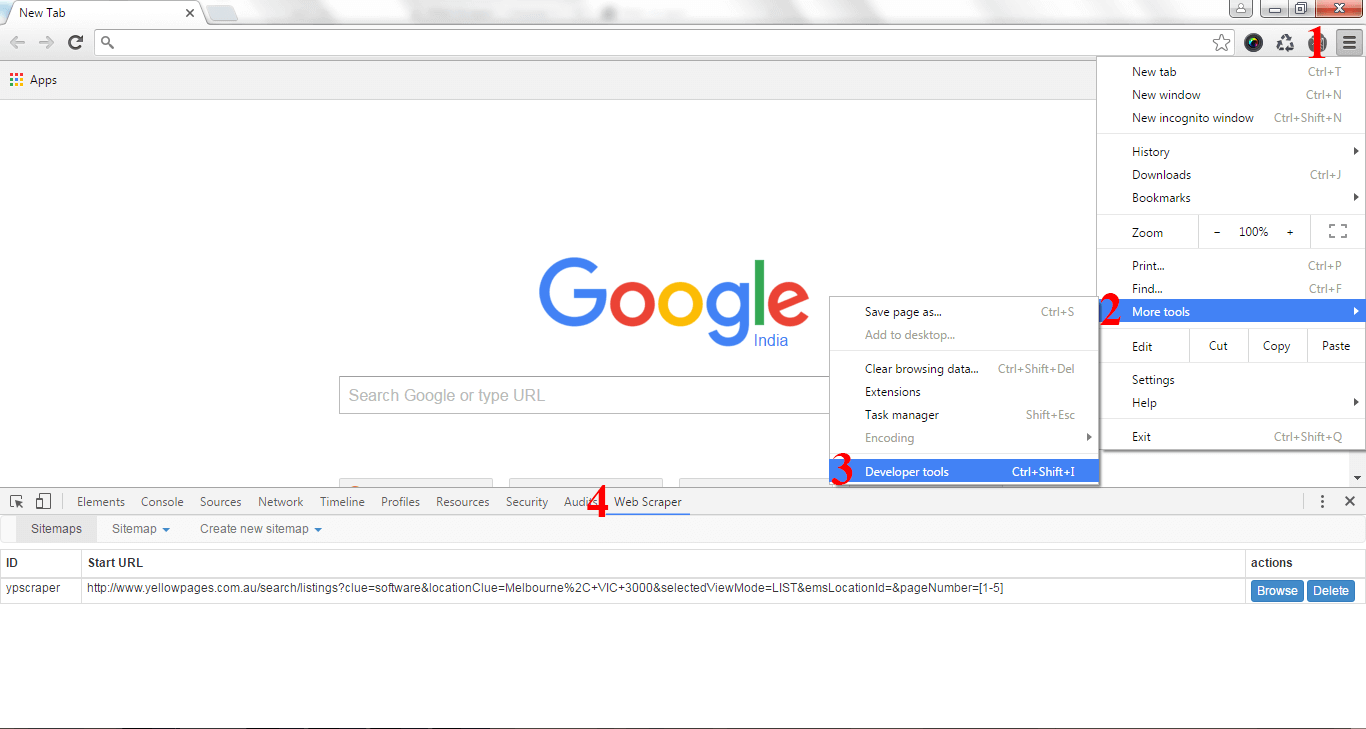

Scraper

This web crawling tool is a Chrome extension with limited data extraction features, but it is still very useful for online research. It suits both beginners and experts. You can copy the extracted data to the clipboard or store it in spreadsheets using OAuth. Scraper can auto-generate XPaths for defining the URLs to crawl. However, it does not offer all-inclusive crawling services.
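To give a feel for what an XPath selects, here is a small sketch using `xml.etree.ElementTree` from Python's standard library, which supports a limited XPath subset. The markup and the XPath expressions are invented for illustration and are not taken from Scraper itself; real-world HTML is often not well-formed and would need a more lenient parser.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed snippet standing in for part of a web page.
SNIPPET = """
<table>
  <tr><td class="name">Alpha</td><td><a href="/items/1">view</a></td></tr>
  <tr><td class="name">Beta</td><td><a href="/items/2">view</a></td></tr>
</table>
"""

root = ET.fromstring(SNIPPET)

# Every <td> whose class attribute equals "name", at any depth.
names = [td.text for td in root.findall(".//td[@class='name']")]

# Every <a> nested as tr/td/a; these hrefs are the URLs a crawler would visit next.
links = [a.get("href") for a in root.findall(".//tr/td/a")]

print(names)
print(links)
```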

OutWit Hub

OutWit Hub offers dozens of web data extraction features that simplify web searches. The crawler can browse through web pages and store the extracted information in a structured format. It provides a single interface for scraping tiny or huge amounts of data, and it lets you scrape web pages from within the browser itself. It also includes automation tools for this purpose.

ParseHub

ParseHub is a more capable web crawler than those mentioned so far. It supports collecting data from multiple websites that use JavaScript, AJAX, or cookies. It uses machine learning (ML) technology to read, analyze, and transform web documents into the target data. The ParseHub desktop application is compatible with macOS, Windows, and Linux.

VisualScraper

VisualScraper is another data extraction tool that is free and requires no coding knowledge. With its simple user interface, you can get real-time data from web pages and export it as CSV, JSON, XML, or SQL files. It also provides web scraping services with data delivery. Users can schedule projects to run at a specific time or to repeat at intervals of minutes, days, weeks, months, or even a year, which makes it easy to extract news and the latest updates from forums on a regular basis.
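Exporting scraped records to formats such as CSV and JSON is straightforward once the data is structured. The following sketch uses Python's built-in `csv` and `json` modules; the sample records and the function names are hypothetical and only illustrate the general idea, not VisualScraper's own export code.

```python
import csv
import io
import json

# Hypothetical records, as a scraper might collect them from a forum.
rows = [
    {"title": "Post one", "author": "ann", "replies": 3},
    {"title": "Post two", "author": "bob", "replies": 0},
]


def to_csv(records):
    """Serialize a non-empty list of dicts to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=list(records[0]), lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


def to_json(records):
    """Serialize the same records to pretty-printed JSON text."""
    return json.dumps(records, indent=2)


print(to_csv(rows))
print(to_json(rows))
```

CSV suits spreadsheets, while JSON preserves value types such as numbers, so the better choice depends on where the data goes next.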

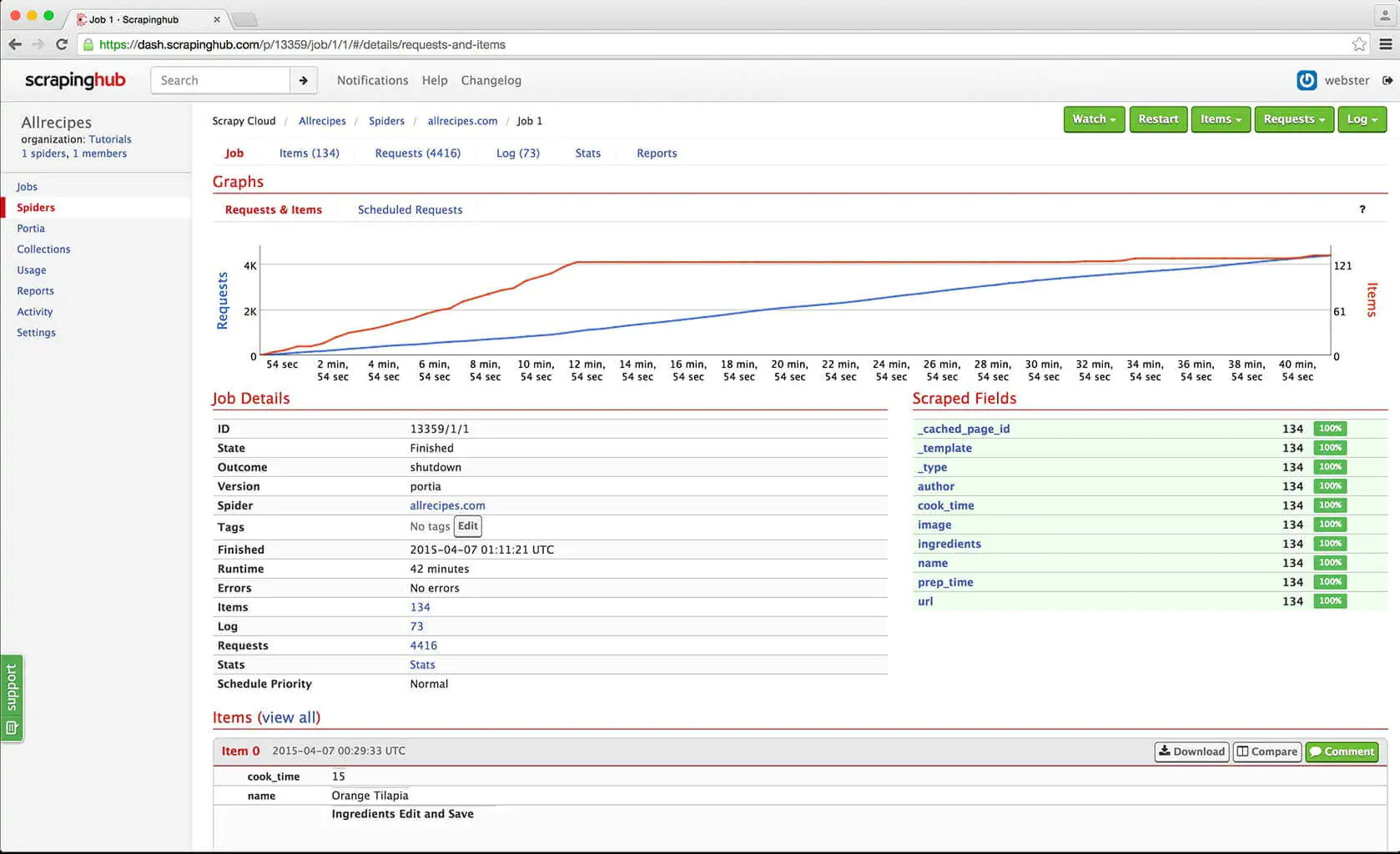

Scrapinghub

Scrapinghub is a cloud-based data extraction tool that lets users fetch valuable data with little or no programming knowledge. Scrapinghub uses Crawlera, a smart proxy rotator that helps bypass bot countermeasures so that bot-protected sites can be crawled efficiently and quickly. It also lets users crawl from multiple IPs and locations without much pain, and it can convert an entire website or web page into well-organized content. In addition, a team of experts is available to help build crawlers that suit your data requirements.
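The basic idea behind a proxy rotator is to send each request through a different proxy in turn. The sketch below shows simple round-robin rotation in Python; it is an assumption-laden illustration, not Scrapinghub's implementation, and the `ProxyRotator` class, the proxy addresses, and the URLs are all made up. A production rotator would also track failures, bans, and request rates.

```python
from itertools import cycle


class ProxyRotator:
    """Hands out proxies in round-robin order."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._pool = cycle(proxies)  # endless iterator over the proxy list

    def next_proxy(self):
        return next(self._pool)


# Hypothetical proxy addresses and target URLs.
rotator = ProxyRotator(["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"])
urls = [f"https://example.com/page/{n}" for n in range(1, 5)]

# Pair every request with the proxy it would be sent through.
assignments = [(url, rotator.next_proxy()) for url in urls]
for url, proxy in assignments:
    print(url, "via", proxy)
```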

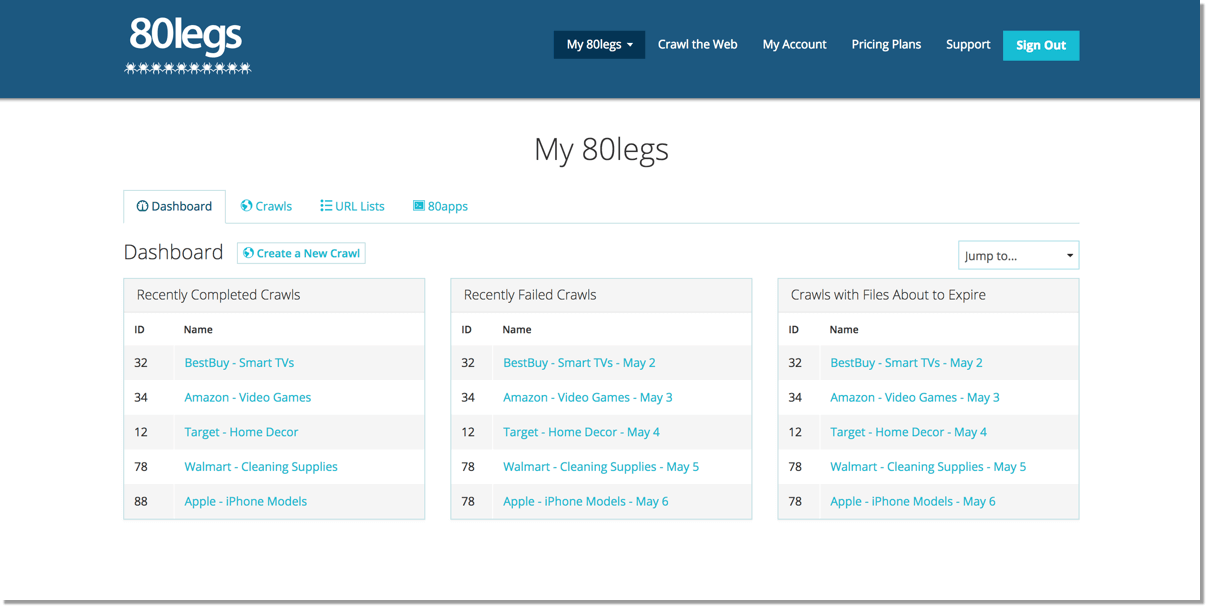

80legs

80legs is a powerful web crawling tool that can be configured to your custom requirements. It supports fetching large amounts of data, with the option to download the extracted data. 80legs provides high-performance web crawling that works rapidly to match your data needs.

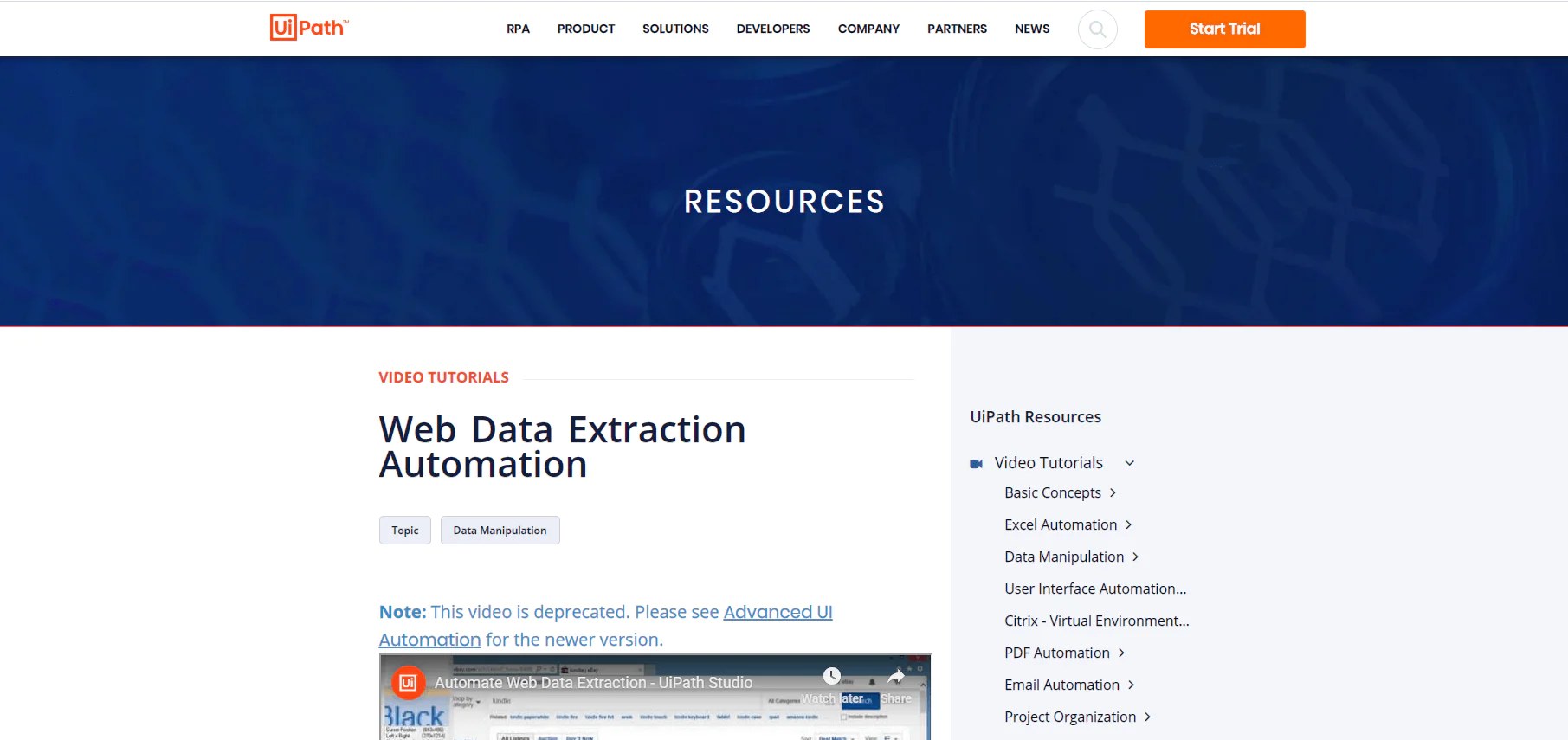

UiPath

UiPath is robotic process automation software that offers free web scraping. It automates the crawling of web and desktop data out of most third-party apps. You can install the robotic process automation software if you run Windows. UiPath can extract data from pattern-based web pages as well as tabular web pages.

UiPath offers a large variety of built-in tools for web crawling, an approach that is very effective when dealing with complex UIs. The screen scraping tool can handle individual text elements, groups of text, and blocks of text such as data in table format. You do not need programming skills to use the tool, but the .NET hacker inside you will have complete control over the data.
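Extracting tabular data from a web page comes down to walking the table markup row by row and cell by cell. The Python sketch below does this with the standard library's `html.parser`; it is a generic illustration with a made-up sample table and a hypothetical `TableParser` class, and it does not use or reflect UiPath's own API.

```python
from html.parser import HTMLParser


class TableParser(HTMLParser):
    """Collects the cell text of every table row into a list of lists."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._row = None    # cells of the row currently being read
        self._cell = None   # text fragments of the cell currently being read

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th") and self._row is not None:
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and self._row is not None:
            self.rows.append(self._row)
            self._row = None


# Hypothetical table markup.
SAMPLE_TABLE = (
    "<table>"
    "<tr><th>Tool</th><th>Platform</th></tr>"
    "<tr><td>HTTrack</td><td>Windows, Linux</td></tr>"
    "</table>"
)

parser = TableParser()
parser.feed(SAMPLE_TABLE)
print(parser.rows)
```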

How Can ITS Help You With Web Scraping Services?

Information Transformation Service (ITS) offers a variety of professional web scraping services delivered by experienced team members and technical software. ITS is an ISO-certified company that addresses all of your big data and data reliability concerns. ITS has served millions of established and struggling businesses, helping them make their mark at an affordable price. Beyond that, we build custom service packages around your concerns and database requirements. At ITS, our customers are our most valued asset, and we reward them with a unique, state-of-the-art service package. If you are interested in ITS web scraping services, you can ask for a free quote!