How to scrape data with OutWit Hub?

If you want to grow your business, you have to look at what your competitors are doing. These days, the best way to analyze the data on a rival’s website is to extract it first and then analyze it, which is why businesses are turning to web scraping. Businesses can use web scraping to collect information about their own products and similar ones, and evaluate how it affects their pricing strategy. Companies can use this information to set the price that brings in the most income. This matters for both small and large businesses, since it helps increase interactions, sales, and overall success.

There are many web scrapers and tools available. In this article, we will learn about OutWit Hub and how to use it for web scraping.

What is web scraping?

Web scraping is an automated technique for gathering large volumes of data from websites. Most of this data is unstructured HTML, which is transformed into structured data in a database or spreadsheet so that it can be used in other applications. Web scraping can be done in several ways, including using site-specific APIs, using online services, or writing your own code from scratch.

The crawler and the scraper are the two components needed for web scraping. The crawler is an automated program that browses the web by following links to find the pages that contain the data needed. The scraper, on the other hand, is a tool designed to extract the data from those pages. The scraper’s design can vary significantly depending on the difficulty and size of the project, so that it extracts the data efficiently and precisely.
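The link-following part of a crawler can be sketched in a few lines of Python using only the standard library. This is a minimal illustration, not how any particular tool works: the sample page and the `https://example.com` address are made up, and a real crawler would download each page over the network and then visit the collected links in turn.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class LinkCollector(HTMLParser):
    """Collects the absolute URL of every <a href="..."> on a page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links against the page's own address.
                self.links.append(urljoin(self.base_url, href))


# Hypothetical page content and address, used only for illustration.
SAMPLE_PAGE = """
<html><body>
  <a href="/products/juicers">Juicers</a>
  <a href="https://example.com/about">About</a>
  <a name="no-href">Not a link</a>
</body></html>
"""

collector = LinkCollector("https://example.com/index.html")
collector.feed(SAMPLE_PAGE)
for link in collector.links:
    print(link)
```

The anchor without an `href` attribute is skipped, so only pages that can actually be visited end up in the list.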

Working of web scrapers

Web scrapers can collect all the information on particular websites or only the specific information a user requests. It is best to describe the data you require so that the web scraper retrieves just that information, and does so quickly. For instance, you might want to scrape Amazon to find out what kinds of juicers are offered, but you might only need the models of the various juicers and not the customer reviews.

When a web scraper needs to scrape a website, it is first given the URLs. Then the HTML code of those pages is loaded. A more powerful scraper might also load the CSS and JavaScript. The scraper then extracts the necessary data from this HTML code and outputs it in the format the user has chosen. The data is typically stored as an Excel spreadsheet or a CSV file, but it can also be saved in other formats, such as a JSON file.
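The load, extract, and output steps can be sketched as follows, using only Python's standard library. The HTML snippet, the `model` and `review` class names, and the juicer names are invented for illustration; a real scraper would first download the HTML from the given URLs.

```python
import csv
import io
import json
from html.parser import HTMLParser

# Hypothetical product listing; a real scraper would download this HTML from a URL.
SAMPLE_HTML = """
<div class="product"><span class="model">Juicer A100</span><p class="review">Great!</p></div>
<div class="product"><span class="model">Juicer B200</span><p class="review">Too loud.</p></div>
"""


class ModelExtractor(HTMLParser):
    """Keeps the text of <span class="model"> elements and ignores everything else."""

    def __init__(self):
        super().__init__()
        self.in_model = False
        self.models = []

    def handle_starttag(self, tag, attrs):
        if tag == "span" and dict(attrs).get("class") == "model":
            self.in_model = True

    def handle_endtag(self, tag):
        if tag == "span":
            self.in_model = False

    def handle_data(self, data):
        if self.in_model and data.strip():
            self.models.append(data.strip())


extractor = ModelExtractor()
extractor.feed(SAMPLE_HTML)
rows = [{"model": name} for name in extractor.models]

# Output the same records as CSV text and as JSON text.
csv_buffer = io.StringIO()
writer = csv.DictWriter(csv_buffer, fieldnames=["model"])
writer.writeheader()
writer.writerows(rows)
csv_text = csv_buffer.getvalue()
json_text = json.dumps(rows, indent=2)

print(csv_text)
print(json_text)
```

Because the extractor only listens for the `model` spans, the customer reviews in the same markup never reach the output.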

Benefits of web scraping

Automation

The first and most significant advantage of web scraping is automation: scraping tools make it easier than ever to retrieve data from various websites with only a few clicks. It was possible to extract data before these tools existed, but the process was laborious and slow.

Imagine how time-consuming it would be if someone had to copy and paste text, photos, or other data by hand every day. Thankfully, modern web scraping techniques make it quick and easy to extract vast amounts of data.

Generate traffic

Every website owner wants visitors. The question is how to get them. Web scraping provides a large amount of data that helps you find the answer. By examining information from many websites, such as keywords, meta tags, and photos, you can learn what works and apply it to your own site.

Data analysis can lead to better content, and better content draws more visitors. Over time, that improves your website’s ranking. This is one of the main reasons to use web scraping.

Helps in developing interactive pages

To boost customer engagement, a page must be interactive. Web scraping has an effect here as well. It provides you with an idea of the forms on the web pages of other websites. It helps you to compare them and create the ideal form that will generate leads and consumers for you.

Visitors are more likely to provide their information, and possibly even buy the product, if your form is well designed. Additionally, you can create a list of existing or potential clients who could be interested in your offering.

Help in price setting

Do you frequently have trouble deciding how much to charge for your goods? This is where web scraping can be of assistance. It assists you in establishing fair pricing in comparison to competitors in the industry.

Web scraping keeps track of numerous sellers’ products and pricing information. For purposes of affiliation, analysis, and comparison, it gathers a large number of datasets.

Web scraping software, for instance, can browse retail websites and gather sales and pricing history information. Once you have clients, you can cut your price.
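Once prices have been scraped, the comparison itself is simple arithmetic. The sketch below assumes the prices are already collected; the seller names and all figures are hypothetical.

```python
from statistics import median

# Hypothetical scraped prices for the same product from different sellers.
competitor_prices = {"Seller A": 49.99, "Seller B": 54.50, "Seller C": 59.00}
our_price = 57.00

# Where does our price sit relative to the market?
market_median = median(competitor_prices.values())
cheapest_seller = min(competitor_prices, key=competitor_prices.get)
difference = round(our_price - market_median, 2)

print(f"Median competitor price: {market_median}")
print(f"Cheapest seller: {cheapest_seller}")
print(f"Our price minus the median: {difference}")
```

A positive difference means the product is priced above the middle of the market, which is the kind of signal that can feed into a pricing decision.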

What is OutWit Hub?

OutWit Hub is a web data extraction program made to automatically extract data from local or online sources. It detects and captures links, images, documents, contacts, recurring words and phrases, and RSS feeds. It also transforms structured and unstructured data into organized tables that can be exported to spreadsheets or databases. The initial version was released in 2010, and version 9.0 was released in January 2020.

The software has a Mozilla-based browser and a sidebar that gives access to various views with preset extractors. In these views, the components of web pages and text documents are broken down and displayed as tables. The application can navigate through series of links and search engine results pages, extract pieces of information, arrange them in tables, and export them in a variety of formats.

How to scrape data with OutWit Hub?

Many websites show data in lists or tables without allowing users to download the material. This is a concern, especially for government websites that are meant to provide the general public with access to public information.

OutWit Hub can solve that problem. As an illustration, we’ll take information from the website of the Disciplinary Board of the Supreme Court of Pennsylvania.

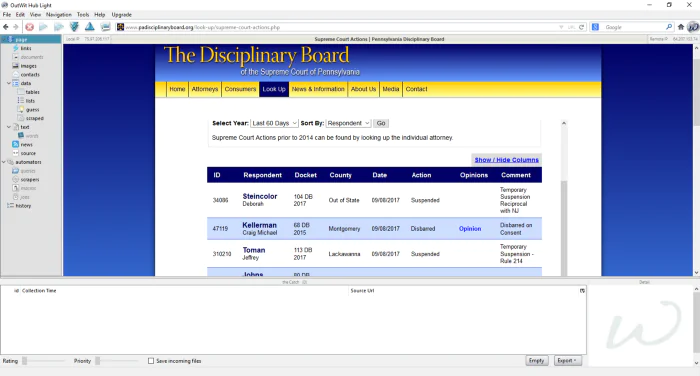

Extracting public data

The page displays a list of recent actions taken by the Pennsylvania Supreme Court against state attorneys. The table shows each attorney’s name, county, and the action taken against them (e.g., suspension, disbarment).

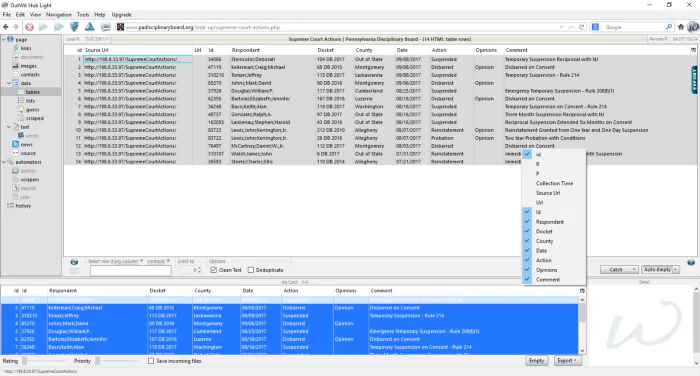

The webpage appears like this in OutWit Hub. On the left side of the browser is an options menu. Click “tables” after selecting “data.”

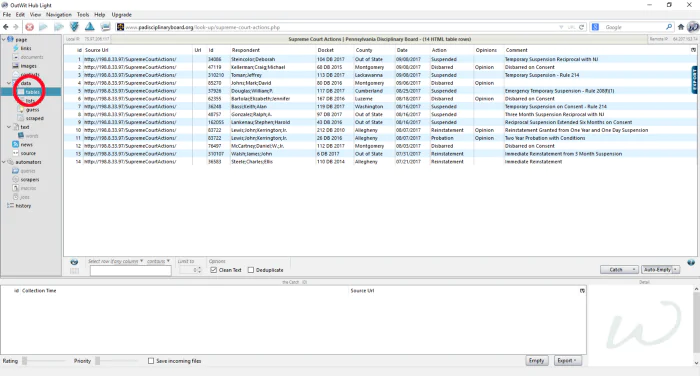

Problem solved! The information has been taken from the table by OutWit Hub.

Since the data are contained in an HTML <table>, we selected “tables”. We would select “lists” if the data were nested in an HTML list (i.e., a <ul> or an <ol>) instead of a <table>.

By pressing Ctrl-A (or ⌘-A on a Mac), we can select all of the data. If we only want a few rows, we can click them while holding down the Shift key.
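To see why the distinction matters, here is a rough Python sketch of how table rows and list items sit in different HTML elements. It is not OutWit Hub's own code, only an illustration with the standard library, and the names and values in the sample markup are invented.

```python
from html.parser import HTMLParser

# Hypothetical markup; names and values are invented for illustration.
SAMPLE_HTML = """
<table>
  <tr><th>Name</th><th>County</th><th>Action</th></tr>
  <tr><td>Jane Doe</td><td>Example County</td><td>Suspension</td></tr>
</table>
<ul><li>First item</li><li>Second item</li></ul>
"""


class TableAndListParser(HTMLParser):
    """Reads <tr>/<td>/<th> cells into rows and <li> elements into items."""

    def __init__(self):
        super().__init__()
        self.table_rows = []   # one list of cell texts per <tr>
        self.list_items = []   # one string per <li>
        self._row = None       # cells of the row currently being read
        self._text = None      # text pieces of the current cell or item

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th", "li"):
            self._text = []

    def handle_data(self, data):
        if self._text is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._row is not None and self._text is not None:
            self._row.append("".join(self._text).strip())
            self._text = None
        elif tag == "li" and self._text is not None:
            self.list_items.append("".join(self._text).strip())
            self._text = None
        elif tag == "tr" and self._row is not None:
            self.table_rows.append(self._row)
            self._row = None


parser = TableAndListParser()
parser.feed(SAMPLE_HTML)
print(parser.table_rows)
print(parser.list_items)
```

Table data comes out as rows of cells, while list data comes out as a flat sequence of items, which is why a tool needs to know which structure to look for.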

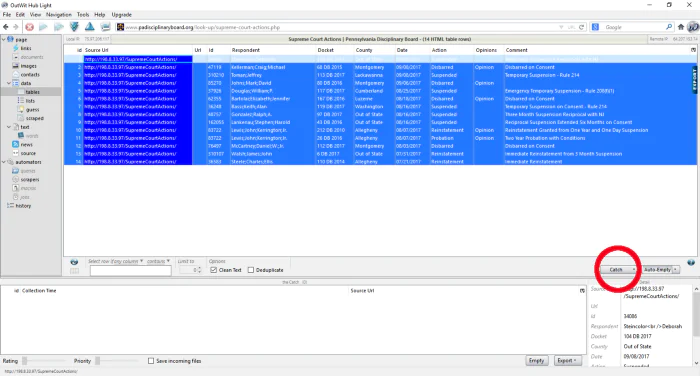

Once we’ve made our choice, click “catch.”

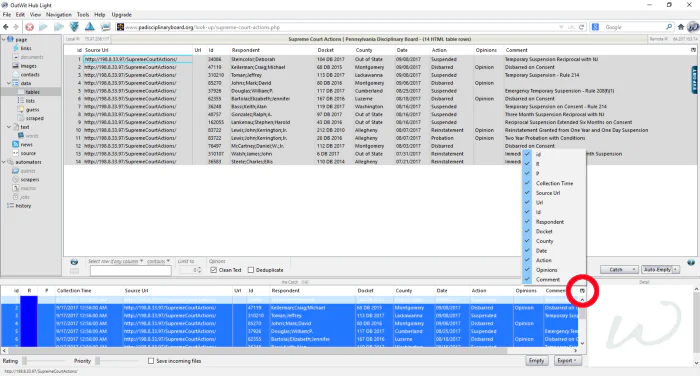

Our data has to be sorted and filtered now. Click the icon that resembles a miniature spreadsheet with a triangle:

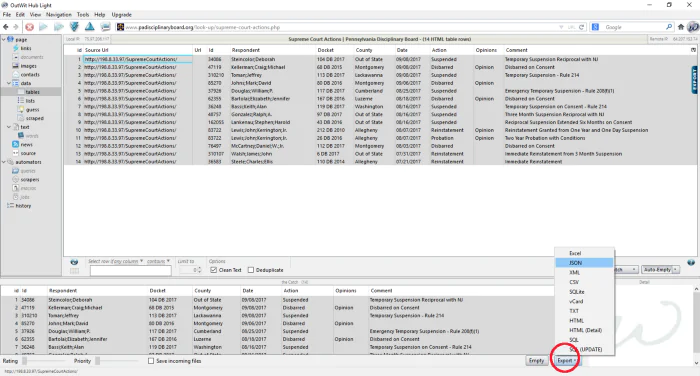

Some of the columns are empty, and some people’s information is jumbled. Let’s clear those out.
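The same kind of cleanup can be expressed in code once the rows are outside the tool. The sketch below is an illustration under assumptions: the column names and rows are hypothetical, and "jumbled" is treated simply as "missing a value in a column we keep".

```python
# Hypothetical captured rows; the "Notes" column is empty in every row,
# and the last row is missing its name.
header = ["Name", "Notes", "County", "Action"]
rows = [
    ["John Roe", "", "Sample County", "Disbarment"],
    ["Jane Doe", "", "Example County", "Suspension"],
    ["", "", "Sample County", "Suspension"],
]

# Keep only the columns that hold a value in at least one row.
keep = [i for i in range(len(header)) if any(row[i].strip() for row in rows)]
clean_header = [header[i] for i in keep]

# Drop rows that are missing a value in any remaining column, then sort by name.
clean_rows = sorted(
    [row[i] for i in keep]
    for row in rows
    if all(row[i].strip() for i in keep)
)

print(clean_header)
for row in clean_rows:
    print(row)
```

Whether an incomplete row should be dropped or repaired by hand depends on the data; dropping is only the simplest choice for a sketch.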

Finally, click “export” and select a file format. OutWit Hub can save our data in common file formats such as CSV, HTML, and JSON. Even better, it can insert our data into a SQL database.
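For readers who want to see what inserting scraped rows into a SQL database looks like outside the tool, here is a small sketch using Python's built-in `sqlite3` module. The `actions` table, its columns, and the records are hypothetical, and an in-memory database stands in for a real one.

```python
import sqlite3

# Hypothetical cleaned records, invented for illustration.
records = [
    {"name": "Jane Doe", "county": "Example County", "action": "Suspension"},
    {"name": "John Roe", "county": "Sample County", "action": "Disbarment"},
]

# An in-memory SQLite database; pass a file path instead to keep the data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE actions (name TEXT, county TEXT, action TEXT)")

# Named placeholders keep the values separate from the SQL text.
conn.executemany(
    "INSERT INTO actions (name, county, action) VALUES (:name, :county, :action)",
    records,
)
conn.commit()

stored = conn.execute("SELECT name, action FROM actions ORDER BY name").fetchall()
print(stored)
conn.close()
```

Once the rows are in a database, they can be filtered and joined with other data using ordinary SQL queries.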

Conclusion

Some websites are incredibly rich in important information: stock prices, product information, sports statistics, business relationships, you name it. To access this information, you would normally have to either use it in the website’s own format or manually copy and paste the data into a new document. This is where web scraping is extremely useful.

Web scraping is the process of extracting data from a webpage. The data is gathered and then exported in a form the user will find more valuable, such as a spreadsheet or an API. Companies can use web scraping for market research and market analysis. Large volumes of high-quality web-scraped data can help businesses assess customer patterns and figure out which direction the company should take in the future. Web scraping is done with tools, and OutWit Hub is one of the commonly used ones. OutWit Hub browses the Web for you, automatically gathering and arranging data and material from online sources. It breaks Web pages into their component parts, navigates across pages automatically, collects pieces of information, and groups them into useful collections.