Top 5 Python Web Scraping Libraries

“We have enough data” is a phrase you will rarely hear in marketing, business, or data science. Situations keep changing, so the need for new and improved data never goes away. This is where web scraping comes into play: it lets you collect more and more information from the web each time you need it. In this blog, we look at the Python libraries used to perform web scraping. We also cover the features, advantages, and disadvantages of each one, so that you can decide for yourself which library best fits your data requirements.

Python Libraries for Web Scraping

Web scraping is the process of extracting unstructured data from the web, converting it into a structured format with the help of special programs, and then storing it in a format that is easy to access and use. The top 5 Python libraries for web scraping are described in detail below:

1. Requests (HTTP for Humans) Library for Web Scraping

Let us begin with one of the most basic Python libraries for web scraping: Requests. To retrieve a web page, your program sends an HTTP request to the website's server, which responds with the page's HTML. Getting the HTML content of a web page is the first step in web scraping.

Requests is a Python library for making all the common types of HTTP requests, such as GET and POST. Its simplicity and easy-to-use interface live up to its tagline, “HTTP for Humans”. Note that Requests does not parse the HTML it retrieves; for that, you need another library, such as Beautiful Soup or lxml.
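Here is a minimal sketch of that first step, assuming Requests is installed (`pip install requests`). The URL, the `User-Agent` string, and the helper name `fetch_html` are placeholders chosen for illustration. The function only downloads the raw HTML; parsing is left to another library.

```python
import requests

# Placeholder URL and User-Agent, used only for illustration.
URL = "https://example.com/"
HEADERS = {"User-Agent": "my-scraper/0.1"}


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Send a GET request and return the page's HTML as text (hypothetical helper)."""
    response = requests.get(url, headers=HEADERS, timeout=timeout)
    # Raise requests.HTTPError for 4xx/5xx responses instead of returning an error page.
    response.raise_for_status()
    # .text decodes the body using the encoding Requests infers from the response.
    return response.text


if __name__ == "__main__":
    html = fetch_html(URL)
    print(html[:200])  # raw, unparsed HTML; Requests does not parse it
```

Setting a `timeout` is a deliberate choice: without one, Requests can wait indefinitely for a slow server.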

Advantages

Very simple to use.

Built-in HTTP Basic authentication.

Support for international domains and URLs.

Support for all common HTTP methods (GET, POST, PUT, DELETE, and more).

HTTP(S) proxy support.
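Several of these features can be configured once on a `requests.Session`, which also reuses connections across requests. The sketch below is illustrative only: the helper name `make_session`, the credentials, the proxy address, and the URL are all hypothetical.

```python
from typing import Optional

import requests


def make_session(username: str, password: str, proxy_url: Optional[str] = None) -> requests.Session:
    """Hypothetical helper: a Session that sends Basic auth and optionally uses a proxy."""
    session = requests.Session()
    session.auth = (username, password)  # a (user, password) tuple means HTTP Basic auth
    if proxy_url:
        # Route both plain HTTP and HTTPS traffic through the same proxy.
        session.proxies.update({"http": proxy_url, "https": proxy_url})
    return session


if __name__ == "__main__":
    # Hypothetical credentials, proxy, and URL.
    with make_session("user", "pass", "http://127.0.0.1:8080") as s:
        r = s.get("https://example.com/data", params={"page": 2}, timeout=10)
        print(r.status_code)
```

Because the settings live on the session, every request made through it inherits the same authentication and proxy configuration.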

Disadvantages

It only retrieves the static HTML that the server sends.

It does not parse HTML.

It cannot scrape content that is loaded by JavaScript.

2. lxml Library for Web Scraping

This web scraping library focuses on parsing the HTML retrieved from web pages. Its standout features are high performance and fast HTML and XML parsing.

lxml wraps the C libraries libxml2 and libxslt, combining their speed with the simplicity of a Python API based on the familiar ElementTree interface. This makes it well suited to scraping large volumes of data from websites. Requests and lxml are commonly combined to fetch pages and then extract data using XPath or CSS selectors (CSS selector support requires the separate cssselect package).
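A minimal sketch of the parsing half of that workflow, assuming lxml is installed. The sample markup, the `product` and `price` class names, and the helper `extract_products` are hypothetical; in a real scraper, `page` would be the HTML returned by Requests. Only XPath is used here, so cssselect is not needed.

```python
from lxml import html

# Hypothetical page content standing in for HTML downloaded with Requests.
SAMPLE_HTML = """
<html><body>
  <div class="product"><h2>Laptop</h2><span class="price">$999</span></div>
  <div class="product"><h2>Phone</h2><span class="price">$599</span></div>
</body></html>
"""


def extract_products(page: str) -> list[dict[str, str]]:
    """Hypothetical helper: collect name and price from each product block via XPath."""
    tree = html.fromstring(page)
    products = []
    for node in tree.xpath('//div[@class="product"]'):
        products.append({
            # string(...) returns the text content of the first matching child.
            "name": node.xpath("string(./h2)").strip(),
            "price": node.xpath('string(./span[@class="price"])').strip(),
        })
    return products


if __name__ == "__main__":
    for product in extract_products(SAMPLE_HTML):
        print(product)
```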

Advantages

It is faster than most other Python parsers.

It is lightweight.

It uses the ElementTree API.

It offers a Pythonic API.

Disadvantages

It does not work as well with poorly designed HTML.

The official documentation is not beginner-friendly.

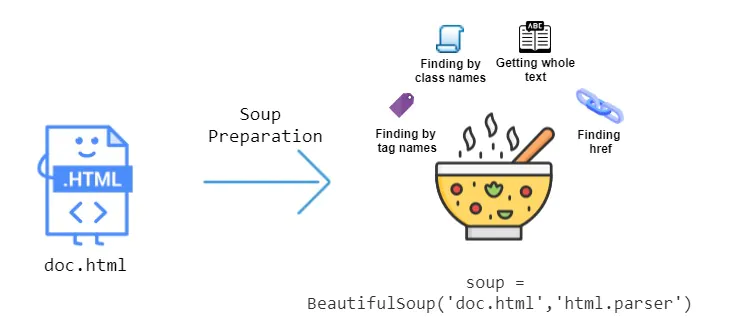

3. Beautiful Soup Library for Web Scraping

Beautiful Soup is one of the most widely used Python libraries for web scraping projects. It creates a parse tree from HTML and XML documents. It also automatically converts incoming documents to Unicode and outgoing documents to UTF-8.
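The encoding behaviour can be seen in a small sketch, assuming Beautiful Soup 4 is installed (`pip install beautifulsoup4`). The byte string below is a hypothetical Latin-1 document that declares its charset in a `<meta>` tag; Python's built-in `html.parser` is used so no extra parser is required.

```python
from bs4 import BeautifulSoup

# Hypothetical Latin-1 encoded bytes; \xe9 is "é" in ISO-8859-1.
RAW = b'<html><head><meta charset="iso-8859-1"></head><body><p>Caf\xe9 menu</p></body></html>'

soup = BeautifulSoup(RAW, "html.parser")

print(soup.original_encoding)  # the encoding Beautiful Soup detected for the input bytes
print(soup.p.get_text())       # already decoded into a Python (Unicode) str
print(soup.p.encode())         # re-encoded as UTF-8 bytes, the default output encoding
```

Here the declared charset lets Beautiful Soup decode the bytes correctly without being told the encoding explicitly.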

A major reason to use Beautiful Soup is that it is relatively easy for beginners to work with. It can also use another parsing library, such as lxml, as its underlying parser; even so, it is slower than using lxml directly. Beautiful Soup copes well with poorly designed HTML. The combination of Requests and Beautiful Soup is widely used by professionals, including for scraping larger volumes of data.
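To illustrate how forgiving Beautiful Soup is, here is a minimal sketch that parses deliberately sloppy markup with unclosed `<li>` and `<p>` tags. The markup, the `news` id, and the helper `extract_links` are hypothetical. The default parser is Python's built-in `html.parser`; passing `parser="lxml"` would switch to lxml as the backend, provided lxml is installed.

```python
from bs4 import BeautifulSoup

# Hypothetical, sloppy markup: the <li> and <p> tags are never closed.
MESSY_HTML = """
<ul id="news">
  <li><a href="/a">First story</a>
  <li><a href="/b">Second story</a>
</ul>
<p>Footer text
"""


def extract_links(page: str, parser: str = "html.parser") -> list[tuple[str, str]]:
    """Hypothetical helper: return (link text, href) pairs for links inside #news."""
    soup = BeautifulSoup(page, parser)  # use parser="lxml" for lxml's faster backend
    news = soup.find(id="news")
    return [(a.get_text(strip=True), a["href"]) for a in news.find_all("a", href=True)]


if __name__ == "__main__":
    print(extract_links(MESSY_HTML))
```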

Advantages

It requires only a few lines of code.

It has thorough documentation.

It comes with an easy-to-learn interface for beginners.

It provides automatic encoding detection.

Disadvantages

Beautiful Soup is slower than lxml.

4. Selenium Library for Web Scraping

The libraries discussed so far share one limitation: they cannot directly scrape data from dynamic websites. On many web pages, the data is loaded by JavaScript after the initial HTML arrives. In simple terms, if a web page is not static, the libraries covered so far cannot extract its data efficiently. So what do you do? This is the point at which you turn to Selenium for data extraction!
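The limitation is easy to demonstrate. In this minimal sketch, which assumes lxml is installed, a hypothetical single-page-app response contains an empty container plus a script that would fill it in a browser. A static parser never runs the script, so the price it finds is an empty string. The markup, the `prices` id, and the helper `visible_prices` are illustrative assumptions.

```python
from lxml import html

# Hypothetical response: the server sends an empty <div>, and the price only
# appears once a browser executes the inline JavaScript.
SPA_HTML = """
<html><body>
  <div id="prices"></div>
  <script>
    document.getElementById("prices").textContent = "$999";
  </script>
</body></html>
"""


def visible_prices(page: str) -> str:
    """Hypothetical helper: the text a static parser sees inside #prices."""
    tree = html.fromstring(page)
    # lxml parses the markup but never executes <script>, so the div stays empty.
    return tree.xpath('string(//div[@id="prices"])')


if __name__ == "__main__":
    print(repr(visible_prices(SPA_HTML)))
```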

Selenium was originally designed to support automated testing of web applications. It controls a real web browser through WebDriver, so it renders pages just as a user would see them. With its help, you can click elements, fill out forms, scroll through pages, and do much more. Because the browser executes JavaScript, Selenium can scrape data from dynamically populated websites. However, loading and rendering full web pages makes it slower, so Selenium is best used when you are not short on time.

Advantages

Selenium comes with an easy-to-learn interface for beginners.

It supports automated web scraping.

Unlike most web scraping libraries, it can efficiently scrape dynamically populated web pages.

It automates real web browsers.

It can interact with a web page much as a person would.

Disadvantages

It is relatively slow.

It is harder to set up, since it needs a browser and a matching driver.

It consumes more memory and CPU, because it runs a full browser.

It is not recommended for large-volume data projects.

5. Scrapy

Now it is time to learn about the most awaited web scraping library of all: Scrapy!

If you are into web scraping, you have almost certainly come across this masterpiece. Scrapy is not just a Python library for web scraping but an entire framework that covers your web scraping needs on one platform. It was developed by Shane Evans and Pablo Hoffman, the co-founders of Scrapinghub (now Zyte). Scrapy is the go-to solution for heavyweight data projects, and it also lets you build pipelines to process the data you scrape.

Advantages

It is asynchronous (built on the Twisted networking engine), so it can handle many requests concurrently.

It has comprehensive documentation.

It comes with various plugins.

It lets you create custom item pipelines and middlewares.

It consumes less memory, so it can run on lower-end CPUs.

Disadvantages

It involves a steep learning curve.

Scrapy is a professional-level Python Library and is not beginner-friendly.

How Can ITS Help You With Web Scraping?

Information Transformation Service (ITS) offers a variety of professional web scraping services delivered by an experienced team using specialized software. ITS is an ISO-certified company that addresses all of your big data and data reliability concerns. For the record, ITS has served millions of businesses, both established and struggling, helping them reach their goals at the most affordable price. We also build customized service packages around your specific database requirements. At ITS, our customers are our most prestigious asset, and we reward them with state-of-the-art service packages. If you are interested in ITS Web Scraping Services, ask for a free quote!