How to use Scrapingbee for Web Scraping?

The volume of data that is a part of our lives is increasing exponentially. Due to this increase, data analyses have become an integral element of organizations’ operations. Although data is available from many sources, its most important source is the internet. As the field of artificial intelligence, extensive data analysis, and machine learning develop, businesses require data analysts who can crawl the web in ever-sophisticated methods.

Many web scraping tools are accessible currently, but in this post, we’ll discover how to utilize Scrapingbee for scraping web pages.

What is Web Scraping?

Web scraping (or scraping data) is a method to obtain data and content on the internet. The data is typically saved in a local file, so you can access it later and edit and analyze it as required. If you’ve copied or pasted information from a website in the Excel spreadsheet, that’s basically what web scraping services is, however, on a tiny scale.

But, when people speak of “web scrapers,” they usually refer to software programs. Web scraping programs (or “bots”) visit websites, locate relevant pages and extract relevant data. Through automation, they can collect massive amounts of data quickly. This can be a significant benefit in the digital age, in which big data is a reality.

What kinds of data can be gathered from the internet?

If there’s information on a website, theoretically, the data is possible to scrape it! Common types of data collected by organizations include videos, images, text, product information, customer opinions and reviews (on websites such as Twitter, Yell, or Tripadvisor), and prices from websites that compare prices. There are some laws regarding web scraping, so you must read them before starting it.

What is the purpose of web scraping?

Web scraping is a vast field of applications, specifically in the area of data analysis. Market research firms use scrapers to collect data from social media sites or online forums to analyze customer sentiment. Other companies scrape information from websites selling products, like Amazon and eBay, to aid in analyzing competitors.

Additionally, Google regularly uses web scraping to study the quality, rank, and index it’s content. Web scraping allows Google to collect information from the websites of third parties before redirecting the information onto their website (for instance, scraping e-commerce websites for use in Google Shopping).

Many businesses also conduct contact scraping, where they use the internet to search for contact information that can be used to market their services. If you’ve allowed an organization access to your contact list as a condition of using the services they offer, you’ve permitted them to do this.

There aren’t any limitations to the way web scraping can be utilized. It’s dependent on the level of creativity you have and what your ultimate objective is. The possibilities are almost infinite, from listing real estate properties and weather data to performing SEO reviews!

But, it must be noted that scraping websites can also be a source of the dark. Criminals often scrape information such as bank account details or other personal information to carry out fraud, intellectual property theft, and extortion. Knowing the risks is essential before embarking on your web scraping venture. Be sure to stay on top of the laws governing scraping websites.

What are the benefits of web scraping?

Competitor Monitoring

The market for e-Commerce has made an enormous leap over the past decade. But, this online retail industry will continue to expand as the digital world is integrated into our lives and change purchasing habits.

Pricing Optimization

Web scraping can be highly beneficial if you need help setting the price. The issue with optimizing is that we need to gain customers to achieve the need to increase profits.

Remember that consumers will spend more money on a product with more excellent value. It is essential to enhance your services in the retail industry where your competitors are not.

Investment Decisions

Web scraping has been introduced previously in the world of investment. In fact, at times, hedge funds employ web scraping to obtain alternative data to minimize the risk of losing money. It can help identify unanticipated risks and possible investment opportunities.

Investments are complex because they typically involve an array of steps before a good decision is made, starting with creating an idea for a thesis, testing it, and finally, studying. The most effective method to evaluate the investment thesis is by analyzing data from the past. This will give you insights into the causes of your past successes or failures, the pitfalls you could avoid, and the future investment returns you may achieve.

For instance, web scraping can extract historical data more efficiently, and you could use the data to feed into a machine-learning database to aid in training models. In the end, investment firms that use big data increase the precision of their analysis results for more effective decision-making.

Time Efficient

The benefit of web scraping is that it is time-efficient and requires minimal maintenance. For instance, downloading large files can take hours, and going through every row by hand can take up your entire month. With web scraping, you’ll be able to make your computer complete the manual work for you in a matter of minutes, which means you’ll have more time for what you’d like to do.

Complete Automation

Many web scraping tools are automated using Big Data Analytics and Machine Learning. One of the benefits of automation is that it’s not exhausting or tiring. It doesn’t need any breaks and never loses focus as long as it follows the instructions.

What is Scrapingbee?

ScrapingBee is an internet scraping tool designed by Kevin Sahin and Pierre de Wulf; Kevin is a web scraping expert and the author of the Java web scraping book. Pierre is an expert in data science. ScrapingBee makes scraping the internet easy and provides an API, so you don’t have to fret over programming languages. It is compatible with every language. It has solved many issues, such as headless Chrome browsing on the server and changing the proxy IP dynamically so that you ensure that it is never blocked. ScrapingBee waits for 2000 milliseconds before returning its Source code since it scrapes HTML-based web pages like a traditional browser in a headless environment. Here are some things you need to know before starting.

Getting Started

Visit the ScrapingBee website to sign up for a free account. They also offer a free plan that includes 1,000 free API calls. That’s plenty to get acquainted with and try out the API.

Go to the dashboard, and take a copy of the API key we will need later in this guide. ScrapingBee offers multi-language support, which means you can use the API key to integrate into your future projects.

Installation

Scrapingbee provides REST API API support which means it is compatible with any programming language, including CURL, Python, NodeJS, Java, Ruby, Php, and Go. We will use Python with the request framework and BeautifulSoup for further scraping. Install them with PIP according to the following steps:

# Install the Python Requests library:

pip install requests

# Additional modules we needed during this tutorial:

pip install BeautifulSoup

How to use Scrapingbee for web scraping?

Quick start

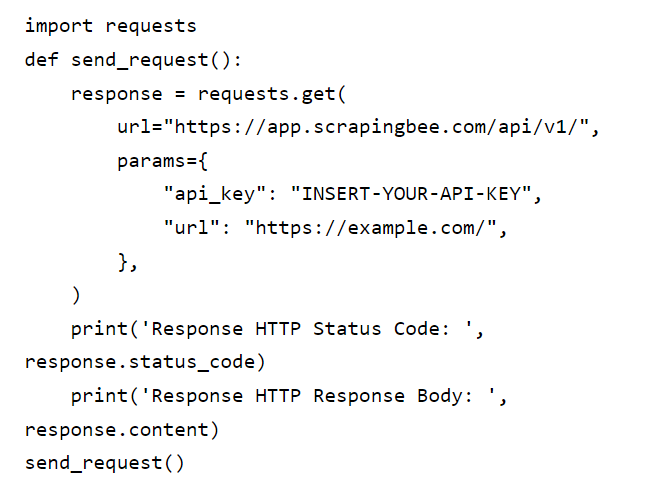

Please make use of the following code to activate the ScrapingBee Web API. Here we create a Request call using some parameters, URL, and API key, and in return, this API replies with an HTML page of the URL we want to target.

Python

We can use BeautifulSoup to improve this output’s readability by simply including a prettified code.

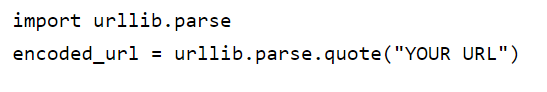

Encoding

You can encode the URL that you wish to scrape using urllib.parse as follows:

ScrapingBee API can also support additional languages, including:

Java

import java.io.IOException;

import org.apache.http.client.fluent.*;

public class SendRequest

{

public static void main(String[] args) {

sendRequest();

}

private static void sendRequest() {

// Classic (GET )

try {

// Create request

Content content = Request.Get(“https://app.scrapingbee.com/api/v1/?api_key=YOUR-API-KEY&url=YOUR-URL”)

// Fetch request and return content

.execute().returnContent();

// Print content

System.out.println(content);

}

catch (IOException e) { System.out.println(e); }

}

}

PHP

<?php

// get cURL resource

$ch = curl_init();

// set url

curl_setopt($ch, CURLOPT_URL, ‘https://app.scrapingbee.com/api/v1/?api_key=YOUR-API-KEY&url=YOUR-URL’);

// set method

curl_setopt($ch, CURLOPT_CUSTOMREQUEST, ‘GET’);

// return the transfer as a string

curl_setopt($ch, CURLOPT_RETURNTRANSFER, 1);

// send the request and save response to $response

$response = curl_exec($ch);

// stop if fails

if (!$response) {

die(‘Error: “‘ . curl_error($ch) . ‘” – Code: ‘ . curl_errno($ch));

}

echo ‘HTTP Status Code: ‘ . curl_getinfo($ch, CURLINFO_HTTP_CODE) . PHP_EOL;

echo ‘Response Body: ‘ . $response . PHP_EOL;

// close curl resource to free up system resources

curl_close($ch);

?>

Let’s scrape information from OLX by using the ScrapingBee API

We’ll utilize Python; an elementary program made up of the Request API, Beautifulsoup, and SrapingBeeAPI to request URLs. In addition, we will remove all smartphones, and specifically tablets, from OLX by their names and cost:

1 Import the modules.

#IMPORT MODULES

Import requests

from bs4 import BeautifulSoup

from time import sleep

2 Initialize the URL and API parameter to Our Web API, and it will provide the web page’s source code.

KEY = ‘Your_API_key’

URL = ‘https://www.olx.in/tablets_c1455’

params = {‘api_key’: KEY, ‘url’: URL, ‘render_js’: ‘False’}

3 Request for ScrapingBee web API to return the scraping within Variable “r.”

r = requests.get(‘http://app.scrapingbee.com/api/v1/’, params=params, timeout=20)

Inspect OLX and find where the product name and prices are by going to this URL: https://www.olx.in/tablets_c1455. Anywhere on the page, click Right Click-> Inspect to open the page in developer mode and start inspecting the page using the Cursor icon at the left corner above the source code.

5 Let’s look into the API by returning status codes from it. Then, use Beautiful Soup to scrape all categories having an ‘EIR5N’ name. “EIR5N’ and

if r.status_code == 200:

html = r.text

soup = BeautifulSoup(html, ‘lxml’)

links = soup.select(‘.EIR5N’)

6 Loop inside the classes and find the product name and price by using the command find(), find all the span tags where data-aut-id=itemTitle and itemPrice

for span in links:

product_name = span.find(‘span’, {‘data-aut-id’: ‘itemTitle’})

print(product_name.text)

price = span.find(‘span’, {‘data-aut-id’: ‘itemPrice’})

print(price.text)

7 Full code

import requests

from bs4 import BeautifulSoup

from time import sleep

def main():

KEY = ‘G2B2GSAPF1LTBJBAR7F0UT8H0VSLC6V7V6EGJRO3MFWFO3EH’

URL = ‘https://www.olx.in/tablets_c1455’

params = {‘api_key’: KEY, ‘url’: URL, ‘render_js’: ‘False’}

r = requests.get(‘http://app.scrapingbee.com/api/v1/’, params=params, timeout=20)

if r.status_code == 200:

html = r.text

soup = BeautifulSoup(html, ‘lxml’)

classes = soup.select(‘.EIR5N’)

for span in classes:

product_name = span.find(‘span’, {‘data-aut-id’: ‘itemTitle’})

print(product_name.text)

price = span.find(‘span’, {‘data-aut-id’: ‘itemPrice’})

print(price.text)

main()

Conclusion

In this course, we’ve learned about the ScrapingBee API, an API that is used to facilitate Web scraping. This API is different because it offers Javascript rendering of web pages that require tools such as Selenium which supports headless browsing. Javascript rendering is dependent on the DOM model. We have also seen an instance where we scraped the name of the product and its price from OLX by using this API.

Keep in mind that ScrapingBee isn’t an application for scraping but an API for the web that can work with other scraping scripts when there are limitations regarding websites. Hence, we require an option that will provide us with the results and not be blocked. ScrapnigBee API can make a single request 1,000 times without being blocked. It can return code sources exceptionally quickly and is extremely simple to utilize. It is possible to read its official instructions for more information on this API.